How MangoApps Surveys Reaches Every Employee — Not Just the Ones With a Desk

The typical employee engagement survey tells you how engaged your already-engaged employees are. Per Emergence Capital, 80% of the global workforce is deskless: factory floor workers, retail associates, field technicians, healthcare aides. These employees rarely appear in the results. Many don't have a company email, don't log into the intranet, and often don't sit at a location where a browser notification reaches them during a shift.

This isn't primarily a participation problem. It's an architecture problem. The survey tools most HR departments use were built for knowledge workers. When you extend that architecture to a 500-person distribution center or a 200-bed hospital, the data you collect accurately represents a small portion of the workforce and goes silent on everyone else.

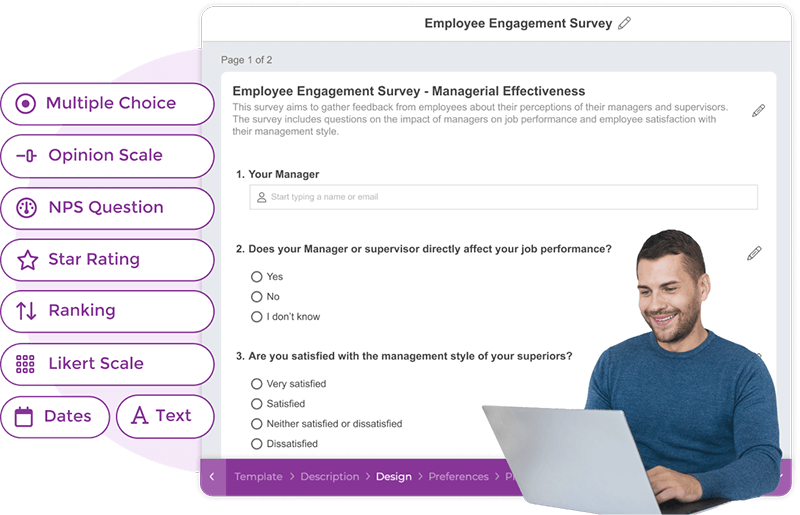

Three recent additions to the MangoApps Survey module address this gap directly: a Rule Builder for conditional question logic, expanded question types including Likert scale and NPS, and acknowledgement tracking for confirmed delivery. Together, they change who a survey program can reach — and what it can actually diagnose.

The architecture problem behind engagement measurement gaps

Gallup's research finds that only 32% of employees feel genuinely engaged at work. That number has barely moved in a decade. The issue isn't that organizations don't survey — most do. The problem is structural: the architecture of most survey programs systematically excludes the employees whose data would matter most.

Per Social Edge Consulting, only 13% of employees use an intranet daily, and nearly a third never log in at all. SWOOP Analytics benchmarks average daily intranet use at six minutes. A survey deployed inside a low-traffic portal reaches only the portion of the workforce that already checks in regularly — skewing the sample toward desk-based, already-engaged employees.

For organizations where the majority of staff are frontline workers, this creates a measurement bias that compounds over time. The employees who interact most directly with customers and operations are systematically absent from the data HR uses to set engagement strategy, prioritize programs, and make retention investments.

The Gallup 2026 State of the Global Workplace analysis documents the downstream consequences of this exclusion — including the correlation between frontline disengagement and customer satisfaction outcomes that organizations consistently underestimate when evaluating whether their survey programs are working.

Argument 1: Conditional logic turns a survey into a diagnostic instrument

The Rule Builder allows administrators to define logic rules that route respondents to different pages or questions based on earlier answers. The practical effect: a single survey behaves differently for each respondent, without requiring multiple survey versions or manual segmentation after the fact.

An employee who rates workload satisfaction as "Very dissatisfied" is automatically routed to an open-ended follow-up — "What one change would make the most difference to your experience?" — while an employee who rates it "Satisfied" skips that page entirely. The dissatisfied respondent generates root-cause data. The satisfied respondent completes the survey in 90 seconds instead of four minutes.
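The routing described above can be sketched in a few lines. This is a minimal illustration of answer-based page routing, not the Rule Builder's actual data model: the `Rule` class, the `next_page` function, and the page and question identifiers are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """Hypothetical rule: if the answer to question_id is a trigger, go to goto_page."""
    question_id: str
    trigger_answers: frozenset
    goto_page: str


def next_page(answers, rules, default_page):
    """Return the page of the first rule whose trigger matches, else default_page."""
    for rule in rules:
        if answers.get(rule.question_id) in rule.trigger_answers:
            return rule.goto_page
    return default_page


# One rule, mirroring the workload example in the text.
RULES = [
    Rule(
        question_id="workload_satisfaction",
        trigger_answers=frozenset({"Very dissatisfied"}),
        goto_page="workload_follow_up",
    ),
]

dissatisfied = next_page({"workload_satisfaction": "Very dissatisfied"}, RULES, "closing")
satisfied = next_page({"workload_satisfaction": "Satisfied"}, RULES, "closing")
print(dissatisfied)  # workload_follow_up
print(satisfied)     # closing
```

Rules are evaluated in order and the first match wins, so a respondent who triggers no rule goes straight to the default page. That default is what keeps the survey short for the satisfied majority.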

This matters because survey length is the primary driver of frontline non-participation. Frontline employees aren't disengaged from giving feedback; they're disengaged from long surveys deployed through channels that don't fit how they work. A survey that adapts to prior answers reduces the time burden for most participants while generating diagnostic depth from the respondents most likely to leave the organization.

Conditional logic also extends survey function into action. An employee who flags a safety concern mid-survey can be routed to the appropriate reporting channel before they exit — not in a follow-up email that arrives after the moment has passed. Custom survey URLs allow direct distribution through push notification, team chat, or a QR code posted in a break room, removing the navigation barrier entirely for workers who don't start their shift at a browser.

Argument 2: Question type determines what you can benchmark across cycles

Most organizations survey for engagement without using the formats that produce benchmarkable data. The question type additions to MangoApps Surveys close the three gaps most likely to limit what an HR team can do with results over time.

The Likert scale is the standard response format for validated engagement instruments, including most proprietary HR benchmarking tools and psychometric instruments like the Maslach Burnout Inventory. Without it, engagement data cannot be compared against industry norms or trended consistently across survey cycles. A custom 1-5 satisfaction scale is not automatically a Likert scale: an instrument's validated properties hold only for the specific item phrasing and response anchors it was validated with.

NPS applied to employees — eNPS — generates a single score that functions as a proxy for overall engagement: whether an employee would recommend the organization as a place to work. It benchmarks against published industry averages and is stable enough to track quarterly without creating survey fatigue for respondents.
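For reference, the eNPS arithmetic is simple enough to show directly. The sketch below assumes the conventional 0-10 "would you recommend" scale, with 9-10 counted as promoters and 0-6 as detractors. The function name and sample scores are illustrative and are not taken from the MangoApps product.

```python
def enps(scores):
    """Percentage of promoters (9-10) minus percentage of detractors (0-6)."""
    if not scores:
        raise ValueError("eNPS is undefined for an empty response set")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100.0 * (promoters - detractors) / len(scores)


# Ten illustrative responses: 4 promoters, 3 passives, 3 detractors.
sample = [10, 9, 9, 8, 7, 7, 6, 5, 3, 10]
print(enps(sample))  # 10.0
```

Passives (7-8) count toward the denominator but toward neither group, so the score ranges from -100 to +100.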

Ranking questions capture relative preference among options in a way multiple-choice cannot. When a benefits team wants to know whether employees value schedule flexibility more than additional PTO more than childcare support, ranking produces that information directly. Multiple-choice questions produce frequency data; ranking produces preference ordering, which is what resource allocation decisions actually require.

The format choice matters most at scale. A manufacturing organization with 800 employees surveying quarterly needs formats that produce comparable results each cycle. Switching question formats between cycles breaks trend lines and makes it impossible to determine whether a change in scores reflects a change in sentiment or a change in measurement. Adding Likert and NPS at the module level means HR can deploy validated instruments consistently without rebuilding question logic for each survey cycle.

Argument 3: Acknowledgement tracking closes the loop most programs leave open

Sending a survey is not the same as reaching employees. Acknowledgement tracking confirms which specific employees have viewed a survey or policy update — enabling administrators to generate a targeted list of non-responders and follow up through the channel that will actually reach them.

For regulated environments — healthcare, financial services, government contractors — this is a compliance requirement, not a convenience feature. Auditors ask for documentation of who received, viewed, and acknowledged a communication. Email threads don't provide that reliably. A managed platform with acknowledgement tracking produces the audit trail compliance programs require without manual recordkeeping.

The application outside regulated industries is equally direct. A safety communication sent to all warehouse employees should generate a confirmed-received list within 24 hours. When it doesn't, acknowledgement tracking identifies the specific gap — not a general participation rate, but a list of names — so a shift supervisor can follow up through direct conversation or posted notice that will actually reach those individuals.
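As a rough sketch of the follow-up list described above: given the recipients of a communication and the IDs that have acknowledged it, the remaining names can be grouped for a supervisor. The record fields (`id`, `name`, `shift`) and the function name are assumptions for illustration, not a MangoApps export format.

```python
def unacknowledged(recipients, acknowledged_ids):
    """Return recipients with no acknowledgement, ordered by shift then name."""
    acked = set(acknowledged_ids)
    pending = [r for r in recipients if r["id"] not in acked]
    return sorted(pending, key=lambda r: (r["shift"], r["name"]))


# Hypothetical recipient list for a warehouse safety notice.
recipients = [
    {"id": "e1", "name": "Ana", "shift": "night"},
    {"id": "e2", "name": "Ben", "shift": "day"},
    {"id": "e3", "name": "Cy", "shift": "night"},
]

pending = unacknowledged(recipients, acknowledged_ids=["e2"])
for person in pending:
    print(person["shift"], person["name"])
```

Sorting by shift first puts each supervisor's names together, which matches the "list of names" framing rather than a single participation percentage.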

The American College of Radiology case study covers how a distributed, specialized healthcare workforce deployed unified communication infrastructure to close exactly this gap — from broadcast announcement to confirmed receipt, at the department level.

Why platform architecture is the leverage point

The three feature additions matter individually. They matter more because of where they live.

Per IDC, employees in information-intensive roles spend 2.5 hours per day searching for information they can't locate in one place. In frontline settings, that overhead maps to coordination gaps: messages that don't arrive, approvals that sit in the wrong queue, feedback that never surfaces because the tool to collect it is in a separate system from the communication channel employees actually use.

MangoApps Surveys operates within the broader MangoApps platform. When a department's engagement survey reveals low scores on "I understand how my work connects to company goals," that signal sits alongside communication data, shift management records, and workforce analytics in the same environment. The survey finding doesn't require a manual handoff between systems to become an operational response.

A standalone survey tool produces a report. A survey embedded in a unified platform produces an action trigger — informing which content gets prioritized, which managers are flagged for follow-up, and which programs are extended to which employee segments. For organizations evaluating whether their current survey program is generating data or generating change, the difference between those two states is structural. It isn't resolved by better question design alone.

What outcomes indicate the structural change is working

Three metrics indicate whether the feature additions are producing the intended result after deployment.

Participation rate by workforce segment. Track response rates for frontline and desk workers separately. A sustained gap between segments after deployment indicates access configuration still needs adjustment — likely that mobile-first survey delivery hasn't been extended to all frontline job classes, or that survey notifications are still routing through desktop channels.
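A minimal way to compute this metric, assuming a roster of (employee ID, segment) pairs and a list of IDs that responded. The segment labels and function name are hypothetical.

```python
from collections import Counter


def response_rates(roster, responded_ids):
    """Map each segment to the fraction of its invited employees who responded."""
    responded = set(responded_ids)
    invited = Counter(segment for _, segment in roster)
    answered = Counter(segment for emp, segment in roster if emp in responded)
    return {segment: answered[segment] / invited[segment] for segment in invited}


# Illustrative roster: four frontline employees, two desk employees.
roster = [
    ("e1", "frontline"), ("e2", "frontline"),
    ("e3", "frontline"), ("e4", "frontline"),
    ("e5", "desk"), ("e6", "desk"),
]

rates = response_rates(roster, responded_ids=["e1", "e5", "e6"])
gap = rates["desk"] - rates["frontline"]
print(rates, gap)
```

Tracking `gap` across cycles, rather than the blended rate, is what surfaces a segment that the delivery channel is not reaching.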

Conditional depth rate. This is the share of respondents routed to open-ended follow-up pages by the Rule Builder. A program where fewer than 10% of respondents hit a conditional question either is reaching a highly satisfied population or has trigger thresholds that are calibrated too conservatively. This metric catches logic calibration issues before an entire survey cycle produces shallow diagnostic data.
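One possible calculation, assuming each response records the set of pages the respondent saw. The page identifiers and function name are hypothetical. The 10% floor is the figure given above.

```python
def conditional_depth_rate(pages_seen, follow_up_pages):
    """Fraction of respondents who saw at least one conditional follow-up page."""
    if not pages_seen:
        raise ValueError("no responses to evaluate")
    follow_ups = set(follow_up_pages)
    routed = sum(1 for pages in pages_seen if follow_ups & set(pages))
    return routed / len(pages_seen)


# Illustrative cycle: 20 respondents, only one routed to a follow-up page.
pages_seen = [{"intro", "core"}] * 19 + [{"intro", "core", "workload_follow_up"}]

rate = conditional_depth_rate(pages_seen, {"workload_follow_up"})
needs_review = rate < 0.10  # threshold from the text above
print(rate, needs_review)
```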

Time to visible action. Days between survey close and an organizational response — a named policy change, a targeted communication to the relevant team, or a committed follow-up with a timeline. Gallup's research is consistent: employees who have seen feedback lead to a specific, named change at least once participate in subsequent surveys at materially higher rates. The 2026 HR Trends eBook covers the implementation choices that move this metric most reliably for organizations deploying structured feedback programs at scale.

Organizations that treat employee engagement as a data-collection exercise rather than a feedback loop typically see declining participation across cycles, regardless of how much the survey technology improves. Closing the loop requires communicating what the survey found and naming what the organization is doing about it — specifically enough that the employees who responded recognize the connection.

The employees who weren't counted before

The argument for the Rule Builder, expanded question types, and acknowledgement tracking isn't primarily about better survey design. It's about who gets counted.

An engagement survey that excludes 80% of the workforce — the portion that is deskless, per Emergence Capital, and unreachable through desktop-first survey tools — doesn't measure engagement. It measures the engagement of the employees most likely to already be engaged. Investing based on that data leads organizations toward programs that serve desk workers while frontline attrition, safety gaps, and satisfaction problems go unmeasured and unaddressed.

For organizations where frontline workers represent the majority of staff, the updated MangoApps Survey module doesn't change what employee engagement surveys ask. It changes who can answer — and through what channels that answer arrives. That is the operational problem the feature additions are designed to solve.

The MangoApps Team

We're the product, research, and strategy team behind MangoApps — the unified frontline workforce management platform and employee communication and engagement suite trusted by organizations in healthcare, manufacturing, retail, hospitality, and the public sector to connect every employee — deskless or desk-based — to the people, tools, and information they need.

We write about enterprise AI for the workplace, internal communications, AI-powered intranets, workforce management, and the operating patterns behind highly engaged frontline teams. Our perspective is grounded in a decade of building for frontline-heavy industries and shipping AI agents, employee apps, and integrated HR workflows that real employees actually use.
